Constant Literals in Objective-C

Since 2014, when Swift was announced, Objective-C material has been sparse in WWDC sessions, release notes, and new features. However, almost every year there has been something new that makes life a little easier for those of us still using the language. WWDC 2021 is no exception. This year, clang has gained a new Objective-C feature called Constant Literals. In this post, I’ll explain what Constant Literals are and how to use them, along with some nice extra functionality added to the command-line tool plutil to work with them.

String Literals

For as long as I’ve been writing Objective-C, NSString literals have been available. You can include a string in your program by surrounding the text of the string with double quotes prefixed with an @ symbol:

NSString *name = @"Andrew";

[self doSomethingWithAString:@"I'm a string"];

Strings created this way are stored in a TEXT section of the compiled binary. The compiler automatically uniques them, ensuring that there’s only one instance of a given string, no matter how many times it appears in the source code.
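One way to observe the uniquing is to compare pointers. This is only a minimal sketch: the function name LiteralsShareStorage is made up for illustration, and it assumes both literals live in the same source file. Pointer identity is a side effect of how the compiler emits literals, so real code should still compare strings with -isEqualToString:.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper: reports whether two identical literals in this
// file refer to the same NSString object.
static BOOL LiteralsShareStorage(void) {
    NSString *first = @"Andrew";
    NSString *second = @"Andrew";
    // Pointer comparison on purpose: this checks identity, not equality.
    return first == second;
}
```

If the compiler has uniqued the two literals as described above, the function returns YES.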

Strings created this way are considered static expressions and can be used to initialize global variables. This is used very frequently in Objective-C, including in numerous places in the Cocoa frameworks. One common use case is to define globally-visible notification name constants:

static NSString * const CustomNotificationName = @"CustomNotificationName";

Other Object Literals

In 2012, with the release of Xcode 4.4 and LLVM 4.0, Apple introduced Objective-C literals for three more common Objective-C types: NSArray, NSDictionary, and NSNumber. The syntax for them is similar to the syntax for NSString literals.

NSNumber *number = @42;

NSArray *array = @[@"Woz", @"Steve Jobs", @"Ron Wayne"];

NSDictionary *fruitsByColor = @{

@"red": @"apple",

@"yellow": @"orange",

@"purple": @"grape",

};

Before this, instances of these classes could only be created with regular Objective-C initializer call syntax, which was much more verbose and harder to read. This was a really nice new feature, and is now taken for granted.

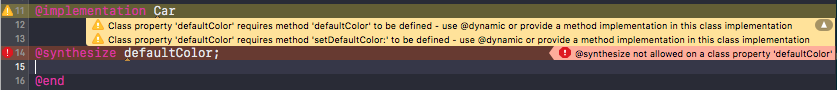

However, unlike NSStrings, these literals were just syntactic sugar for calls to the regular alloc/init methods at runtime, and as such they couldn’t be used to initialize global variables. Trying to do so would produce an error:

static NSNumber * const luckyNumber = @7; // error: initializer element is not a compile-time constant

Objective-C (and C) require the expression used for the initializer of a global variable to be a compile-time constant. While this looks like a simple constant, it’s really something like:

static NSNumber * const luckyNumber = [[NSNumber alloc] initWithInt:7];

At runtime, @7 ends up being a message send to NSNumber (clang actually emits a call to +numberWithInt:, which is equivalent to the +alloc and -initWithInt: pair shown above), and goes through the usual Objective-C message send machinery.

I’ve frequently wished I could initialize global dictionary, array, and number variables with literals in Objective-C, and have resorted to workarounds including class methods/properties to access a global that is initialized at runtime using dispatch_once. This works, but it’s clunky, requires a bunch of ugly boilerplate, more complicated code at callsites, etc.
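For reference, the dispatch_once workaround looks roughly like the sketch below. The accessor name FavoriteFoods and its contents are hypothetical, chosen only to illustrate the pattern; a class method or class property would wrap the same boilerplate.

```objc
#import <Foundation/Foundation.h>

// Hypothetical accessor standing in for a global NSArray constant.
static NSArray<NSString *> *FavoriteFoods(void) {
    static NSArray<NSString *> *foods = nil;
    static dispatch_once_t onceToken;
    // The block runs exactly once, the first time any caller gets here.
    dispatch_once(&onceToken, ^{
        // alloc/init returns an owned object, so this is safe with or without ARC.
        foods = [[NSArray alloc] initWithObjects:@"ramen", @"pizza", @"curry", nil];
    });
    return foods;
}
```

Every callsite then has to write FavoriteFoods() instead of referring to a plain constant, which is the extra friction described above.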

Constant Literals in Xcode 13/Clang 13

Xcode 13 ships with a new major release of Clang/LLVM, version 13. New in this release is support for constant literals for NSNumber, NSArray, and NSDictionary. It can be turned on by passing the new -fobjc-constant-literals flag to clang on the command-line when compiling:

clang -fobjc-constant-literals -framework Foundation test.m

This option is also enabled by default in Xcode 13 so you don’t need to do anything to start using it in your Xcode projects.

With this feature enabled, you can create global constant variables that are instances of NSNumber, NSArray, and NSDictionary, including nesting these types.

The below example program includes three global variables: luckyNumber, favoriteFoods, and peopleByBirthMonth, which are an NSNumber, NSArray, and NSDictionary respectively.

#import <Foundation/Foundation.h>

static NSNumber * const luckyNumber = @7;

static NSArray * const favoriteFoods = @[@"🍜", @"🍕", @"🍛", @"🍔", @"🍍"];

static NSDictionary * const peopleByBirthMonth = @{

@"February": @[@"Steve Jobs"],

@"August": @[@"Woz"],

@"October": @[@"Bill Gates"],

@"December": @[@"Ada Lovelace", @"Grace Hopper"],

};

int main(int argc, char *argv[]) {

@autoreleasepool {

NSLog(@"%@", luckyNumber);

NSLog(@"%@", favoriteFoods);

NSLog(@"%@", peopleByBirthMonth);

}

}

If you try to build this with an older version of clang or Xcode, it will fail with the ‘initializer element is not a compile-time constant’ error described above.

However, you can compile it with clang version 13 like so:

clang -fobjc-constant-literals -framework Foundation test.m

When you run it, you’ll get the expected output:

./a.out

2021-06-07 23:45:39.881 a.out[29750:5660400] 7

2021-06-07 23:45:39.881 a.out[29750:5660400] (

"\Ud83c\Udf5c",

"\Ud83c\Udf55",

"\Ud83c\Udf5b",

"\Ud83c\Udf54",

"\Ud83c\Udf4d"

)

2021-06-07 23:45:39.881 a.out[29750:5660400] {

August = (

Woz

);

December = (

"Ada Lovelace",

"Grace Hopper"

);

February = (

"Steve Jobs"

);

October = (

"Bill Gates"

);

}

Instances created using this new feature are stored as constants in the CONST section of the compiled binary.

The release notes also say:

When possible the compiler also converts statements that are similar to the above examples but are in a non-global scope.

I’m not sure what the criteria for this being possible are, but I take this to mean that even where these kinds of literals are not used to initialize global variables, i.e., anywhere else they’re used in your code, the compiler may optimize them into the CONST section of your binary.

I’d need to investigate more to be 100% certain, but I believe that literals that are so optimized don’t participate in reference counting, the same way NSString literals don’t. This should result in small but potentially important performance improvements, particularly in paths where many of them are quickly created and/or released.

Limitations

There are some limitations on the use of this feature. You can arbitrarily nest these types inside each other, but all of the instances in the hierarchy must be constant as well. This won’t work:

static NSDictionary * const heterogeneousDictionary = @{

@"key1": @"value1",

@"key2": @2,

@"key3": @(pow(2,2)),

};

You’ll get the following error:

a dictionary literal can only be used at file scope if its contents are all also constant literals and its keys are string literals

This is because pow(2,2) is not a compile-time constant.

Another requirement is that constant dictionaries can only have string keys. Unfortunately, even though it’s a relatively common pattern, you’re not allowed to use even constant NSNumbers as keys in a constant dictionary. This won’t work:

static NSDictionary * const gamesByReleaseYear = @{

@1985: @"Super Mario Bros.",

@1989: @"Prince of Persia",

@1991: @"Lemmings",

};

Even though all the literals in the hierarchy are constants, the keys must be strings, and trying to compile this will provoke the same error as above.
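One possible way around the string-key requirement, sketched here as a suggestion rather than anything from the release notes, is to spell the keys as strings and convert the number at lookup time. The helper name GameReleasedInYear is hypothetical, and the file-scope initializer assumes you are compiling with -fobjc-constant-literals enabled.

```objc
#import <Foundation/Foundation.h>

// String keys keep the dictionary eligible to be a constant literal.
// Compiling this at file scope needs -fobjc-constant-literals.
static NSDictionary<NSString *, NSString *> * const gamesByReleaseYear = @{
    @"1985": @"Super Mario Bros.",
    @"1989": @"Prince of Persia",
    @"1991": @"Lemmings",
};

// Hypothetical lookup helper: converts the numeric year to its string key.
static NSString *GameReleasedInYear(NSInteger year) {
    return gamesByReleaseYear[@(year).stringValue];
}
```

The cost is a small string conversion on every lookup, so whether this beats building the dictionary at runtime depends on how it is used.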

Code Generation

Say you have some structured data you’d like to include in your app. Traditionally, you might put it in a plist, then read that plist at runtime, deserializing it into dictionaries, arrays, strings, and numbers. With the constant literals feature, it might be nicer to include it in code, since that way it can be included in your binary itself, potentially providing better performance than reading and parsing a plist at runtime.

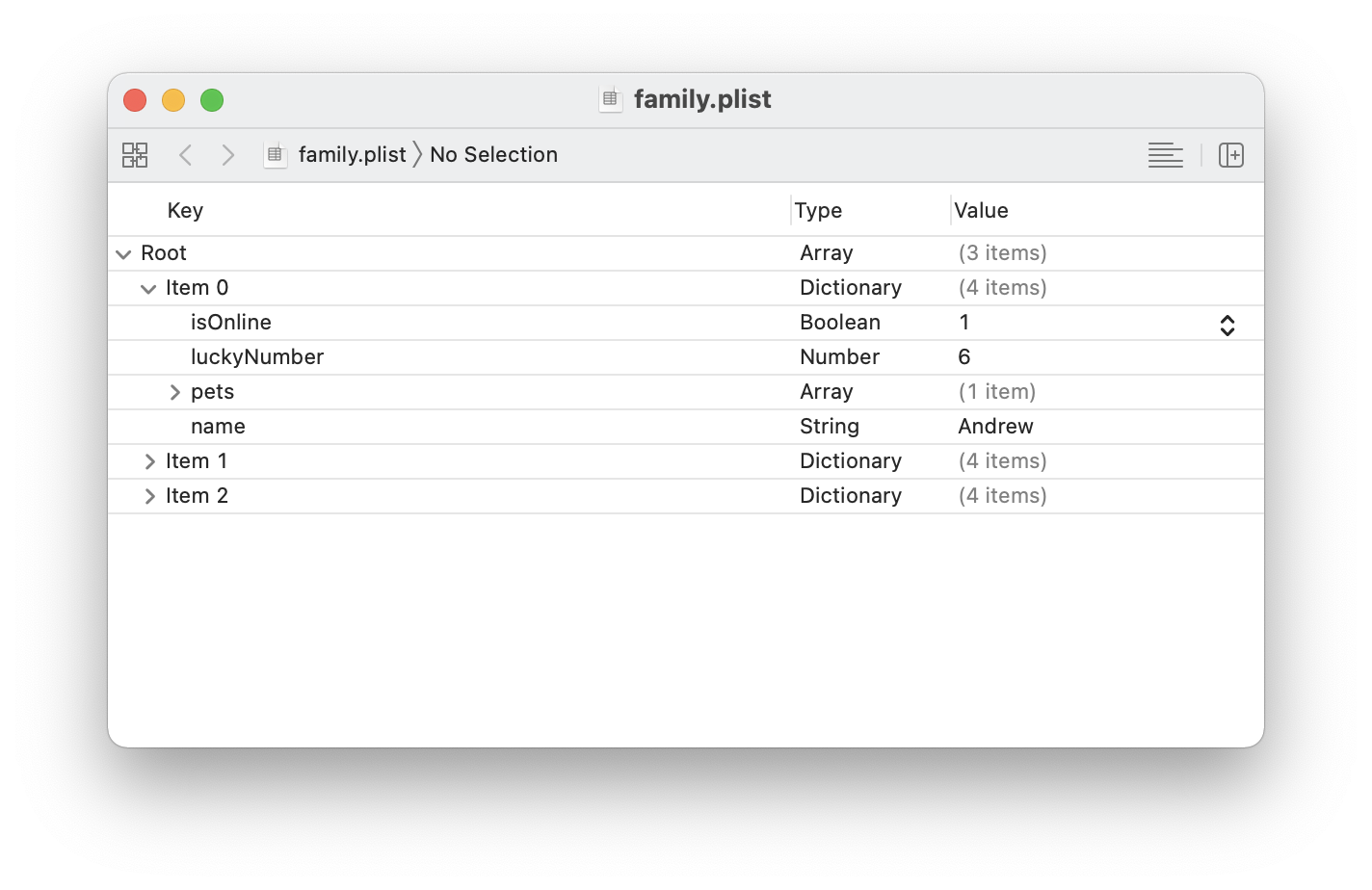
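For comparison, the traditional runtime approach might look like the following sketch. The helper name LoadPropertyList is made up for illustration; the Foundation calls it uses are NSData's +dataWithContentsOfFile: and NSPropertyListSerialization's +propertyListWithData:options:format:error:.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper: reads a plist file and returns its root object,
// or nil if the file is missing or malformed.
static id LoadPropertyList(NSString *path) {
    NSData *data = [NSData dataWithContentsOfFile:path];
    if (data == nil) {
        return nil;
    }
    NSError *error = nil;
    // Parsing happens at runtime, every time the app loads the file.
    id root = [NSPropertyListSerialization propertyListWithData:data
                                                        options:NSPropertyListImmutable
                                                         format:NULL
                                                          error:&error];
    if (root == nil) {
        NSLog(@"Could not parse %@: %@", path, error);
    }
    return root;
}
```

All of the file I/O, parsing, and error handling here is work that goes away when the same data is expressed as constant literals in source code.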

While you can certainly do this manually, Apple has updated the plutil command line tool included with Xcode so that it can create Objective-C source files containing constant literals from plist data. As you might expect, the plist can only contain arrays, dictionaries, strings, and numbers. Given a plist named family.plist, you can convert it with plutil on the command line:

plutil -convert objc -header family.plist

This will create a family.h and a family.m file in the current directory. (The -header flag is optional and tells plutil to generate both .h and .m files. Omit it and you’ll only get a .m file.) The .m file looks like:

#import "family.h"

/// Generated from family.plist

__attribute__((visibility("hidden")))

NSArray * const family = @[

@{

@"isOnline" : @YES,

@"luckyNumber" : @6,

@"name" : @"Andrew",

@"pets" : @[

@{

@"color" : @"white",

@"type" : @"dog",

},

],

},

@{ … },

@{ … }

];

(Some lines collapsed for brevity.)

You could even run plutil from a build phase script to regenerate constant literal code from the plist file(s) at build time.

Conclusions

This is a great new feature and one that I’ve wished for many times over the years. I’ve worked around its absence with various solutions that require more boilerplate code, and which provide worse performance. I’m looking forward to being able to replace that code because of the availability of constant literals.